When the Frame Attacks

Emil Michael, Dario Amodei, and the architecture of public punishment

from Global Drafts

On Thursday, Emil Michael, Under Secretary of War for Research and Engineering, published a lengthy post on X accusing Dario Amodei, CEO of Anthropic, of being “a liar” with a “God-complex.” He said Amodei “wants nothing more than to try to personally control the US Military.” He demanded Amodei testify under oath. He called troop safety a “marketing vehicle” for the Anthropic brand.

This was not a moment of indiscipline. It was not a senior official losing his temper in public, producing an awkward PR problem for the Department of War. It was the mechanism operating in full view.

Understanding why requires a short detour into how frames actually work — not as rhetoric, but as infrastructure.

The Frame Is Not the Argument

In previous pieces, we described what we called “Frame Before Weapon”: the observation that in modern conflict, the interpretive structure deployed before an action determines how that action is received more reliably than the action itself. This applies to military operations. It applies, with equal precision, to procurement disputes.

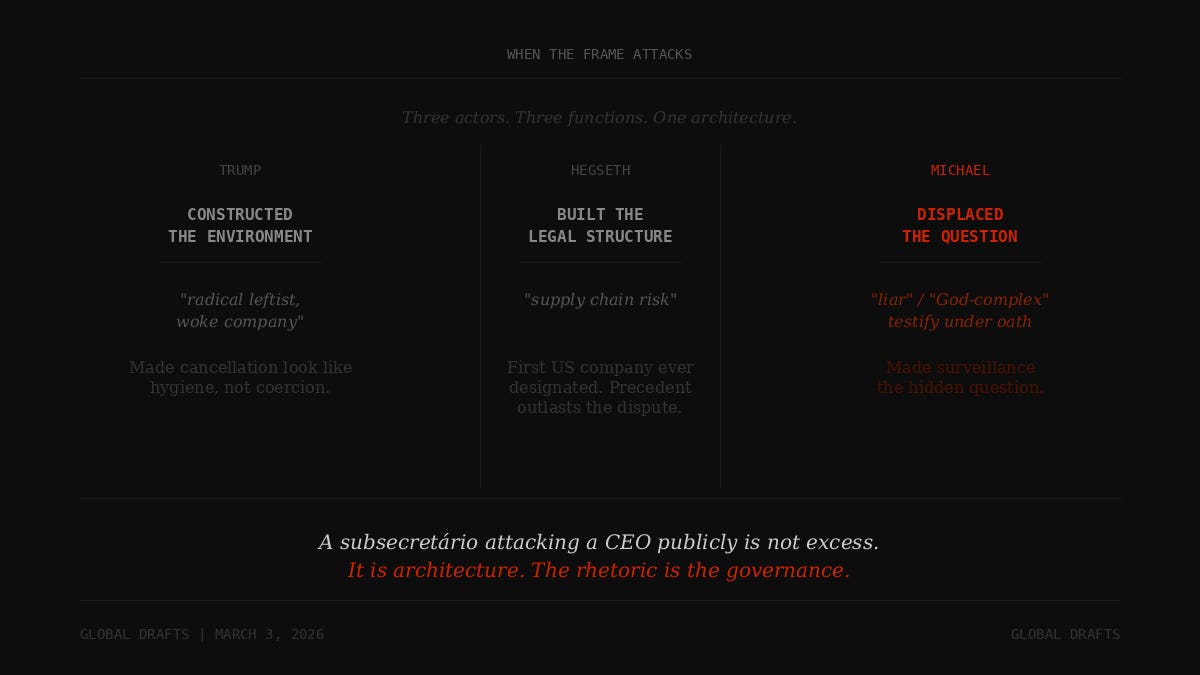

When Trump called Anthropic a “radical leftist, woke company,” he was not characterizing the company accurately. He was constructing the environment in which the contract cancellation would look like ideological hygiene rather than government coercion. When Hegseth declared Anthropic a “supply chain risk” — a designation never before applied to an American company, reserved historically for entities like Huawei — he was not making a security assessment. He was building a legal structure whose precedent would outlast this particular dispute.

And when Emil Michael, in the hours after Hegseth’s announcement, took to X to personally attack Amodei in terms that would be startling from any senior official, he was performing the third function of the frame: establishing, in public and in real time, that the company’s position was not a legitimate policy disagreement but a personal failure of character on the part of its leader.

The attack was not incidental to the architecture. It was part of it.

What the Frame Required

To understand what Michael’s post was doing, it helps to understand what it needed to accomplish.

The actual negotiating record, as reported by The Atlantic and the New York Times based on accounts from over a dozen people, is substantially different from Michael’s characterization. The final breaking point was not Anthropic’s attempt to block LinkedIn searches. It was the Pentagon’s insistence that Claude be available to analyze bulk data collected from Americans — chatbot queries, Google search histories, GPS-tracked movements, credit card transactions — cross-referenced at scale. Anthropic refused. The deal collapsed.

This is the version of events that Michael needed to displace.

If the public understood the dispute as “Pentagon wanted AI to conduct mass domestic surveillance; Anthropic refused; Pentagon canceled contract and labeled company a national security threat,” the government would lose the narrative. The coercive architecture would be exposed. The designation of a US company as a supply chain risk, the first in American history, would look like retaliation, because it is.

Michael’s post accomplished the displacement. By the time he was done, the public debate had shifted to whether Amodei was lying about LinkedIn searches, whether he had the “courage” to answer a phone call, whether his red lines were sincere or strategic. The surveillance question — the actual substance of the impasse — receded from the frame. The personal attack made the structural argument harder to hold.

This is what frames do. They don’t need to be true. They need to be repeated.

The LinkedIn Counterclaim

Michael’s specific allegation — that Anthropic sought language preventing Pentagon employees from conducting LinkedIn searches — is worth examining briefly, not because its truth or falsity resolves the dispute, but because of what its inclusion reveals about the frame’s function.

Even if accurate, the LinkedIn claim is a displacement. It answers a question nobody asked by replacing the question everyone should be asking. The sticking point in the final hours, per multiple accounts, was not public database access for recruiting. It was bulk data analysis of American citizens. Michael’s characterization makes the absurdity of Anthropic’s position legible in a single image — a company so paranoid that it would prevent soldiers from finding job candidates on LinkedIn. Whether the image is accurate is secondary to whether it circulates. It did.

This is the mechanism described in our earlier essays: the frame is built from images that survive compression. “LinkedIn searches” survives compression. “Cross-referencing chatbot queries, GPS movements, and credit card transactions to build profiles of American citizens” does not. One fits in a sentence. One requires explanation. In a public dispute moving at the speed of X, explanation loses.

What OpenAI Inherited

Hours after the Anthropic ban, Sam Altman announced that OpenAI had reached an agreement with the Pentagon. He said the company and the Department of War had “got comfortable with the contractual language.”

The comfortable language, as reported by Axios, does not explicitly prohibit the collection or analysis of Americans’ publicly available data. The surveillance question was not resolved in the Anthropic negotiations. It was transferred.

This is worth stating without moral framing: OpenAI did not “win” because it is less principled than Anthropic, and the comparison is not a verdict on character. The frame selected for compliance. The companies that agreed to “all lawful purposes” without caveats remained in the government’s orbit. The company that drew a line around domestic surveillance was expelled from it, labeled a national security risk, and subjected to a public campaign questioning its CEO’s integrity.

The selection pressure is the point. It does not require anyone to be villainous. It requires only that the structure reward one kind of behavior and punish another. OpenAI is now the Pentagon’s AI contractor. It operates under contractual language that does not explicitly constrain bulk data collection on American citizens. This is the architecture that the frame was built to protect.

The Precedent That Outlasts the Dispute

The “supply chain risk” designation is the detail that will matter longest.

The designation was unprecedented. It is normally reserved for foreign adversaries — companies like Huawei, whose relationship to the Chinese state creates structural conflicts with American national security. Anthropic is an American company, founded by Americans, operating under American law, whose AI model was embedded in classified Pentagon systems and used in Operation Epic Fury during the hours the designation was being prepared.

Amodei called the designation “retaliatory and punitive” and said it had never been applied to an American firm. He said the government lacked the legal authority to extend it to all military contractors — that Hegseth could restrict Pentagon contracts but not the commercial relationships of private companies — and that Anthropic would challenge any formal action in court.

He is probably right about the legal overreach. He is also right about the intent.

But the designation’s function is not primarily legal. Its function is precedent. The next AI company that considers drawing a red line around government use will calculate the cost differently than it would have on February 27, 2026. The cost now includes being labeled a national security threat, having a senior official demand your CEO testify under oath, having the president of the United States post a directive to federal agencies on Truth Social, and watching your competitor sign the contract within hours.

The precedent does not need to be legally durable. It needs to be visible.

The Architecture Revealed

One hundred employees at Google signed an open letter to Jeff Dean on Thursday, asking for the same guardrails Anthropic had demanded — explicit bars on mass domestic surveillance and fully autonomous weapons. The letter signals that the Anthropic fight is not a company-specific controversy. It is an industrywide question about what AI companies can refuse when the government asks.

Sam Altman, in a memo to OpenAI employees, acknowledged that the company would push for similar limitations in its negotiations. “We want to see the same commitments from the government that Anthropic was seeking,” he wrote, according to Axios reporting.

This acknowledgment is important precisely because it was made quietly, to employees, not in the contractual language that the Pentagon saw. Altman said publicly that OpenAI “got comfortable” with the deal. The private acknowledgment to staff suggests the comfort is strategic, not principled. The company drew no line because drawing a line cost Anthropic everything.

This is how the architecture works. It does not require the government to explicitly demand compliance. It requires only that the cost of refusal be made visible enough that future actors internalize it before the negotiation begins.

Emil Michael’s post was the cost being made visible.

The Personal Is Structural

There is a tendency, when a senior official loses his composure in public, to read the loss of composure as the news. Michael called a CEO a liar. Michael demanded testimony under oath. Michael published a lengthy personal attack while the defense secretary was simultaneously announcing a contract ban. This is unusual behavior. It produces headlines that treat the unusual behavior as the event.

But the behavior was not unusual for its context. It was precisely calibrated to it.

Michael’s function in this dispute was not to negotiate a deal. By Thursday afternoon, the deal was already lost — or had already been replaced. OpenAI’s framework was in place. The Anthropic negotiation was theater at that point, running in parallel with a decision that had already been made. Michael’s function was to construct the post-hoc justification: to establish, in the public record, that the failure was Amodei’s character, not the Pentagon’s demands.

An under secretary attacking a CEO publicly is not excess. It is architecture. The rhetoric is the governance.

This is what we mean by “frame before weapon.” The frame does not follow the action. It precedes it, shapes it, and then absorbs the recoil. By the time Hegseth’s designation went public, Michael had already been building the interpretive structure that would make the designation legible as hygiene rather than coercion.

The frame attacked first. The institutional consequences followed.

What This Means for the Question Ahead

The Glass Battlefield — the infrastructure of seeing, the AI surveillance architecture embedding itself in military procurement, the question of who controls the systems that control decision-making — is not a future scenario. It is the current architecture, operating in real time, visible in the gap between what the Pentagon demanded and what it said it demanded.

The surveillance question was always the real question. Anthropic’s refusal named it. The frame’s response buried it. OpenAI’s contract inherited it.

Events flare. Architecture endures.

The question that will persist after Operation Epic Fury is over, after the Iranian succession resolves itself in barracks or in mosques, after the War Powers vote produces its predictable outcome — the question is: which AI company controls the infrastructure of surveillance, under what terms, and who decided those terms were acceptable?

The answer was not decided in the Anthropic negotiation. It was decided before it.

“The Frame Before the Weapon,” “The Frame, Confirmed,” and “The Surveillance Question” — the essays this piece extends — are available at Global Drafts.


— Global Drafts